Users access Splunk through a web browser, and roles can be assigned to control access. Data is decoupled from the applications that produce it and is centralised in a distributed system.

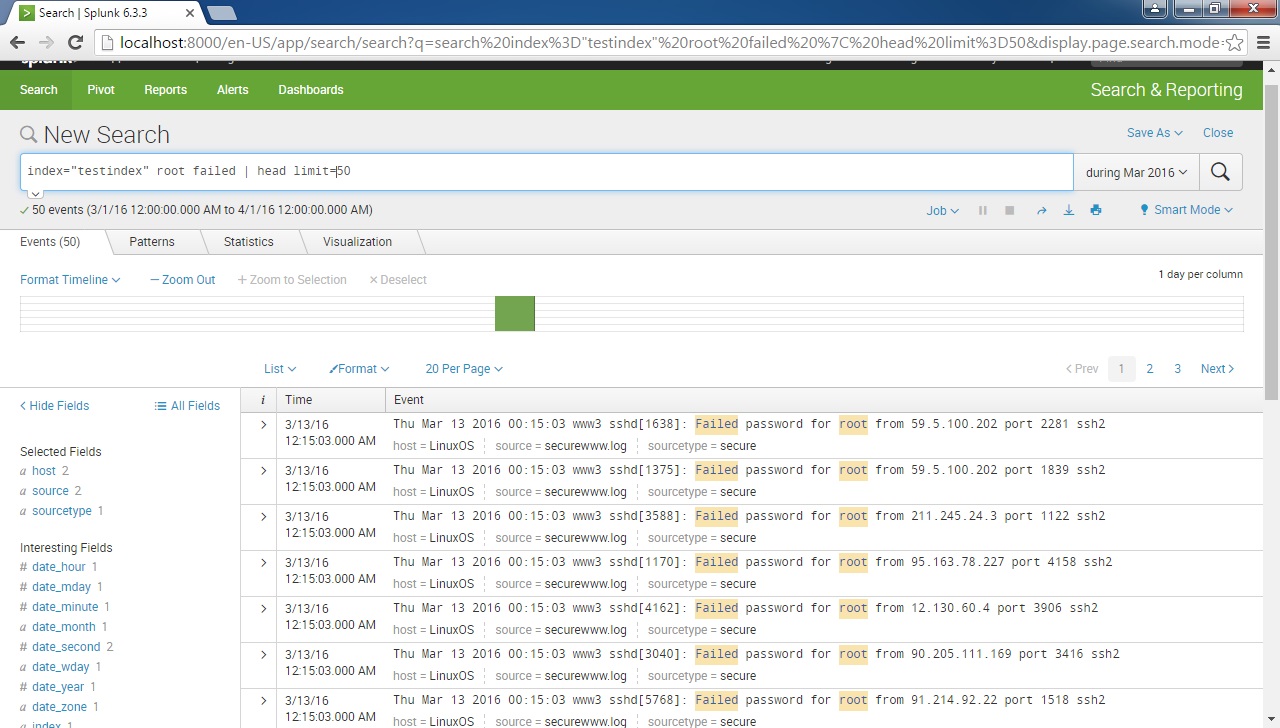

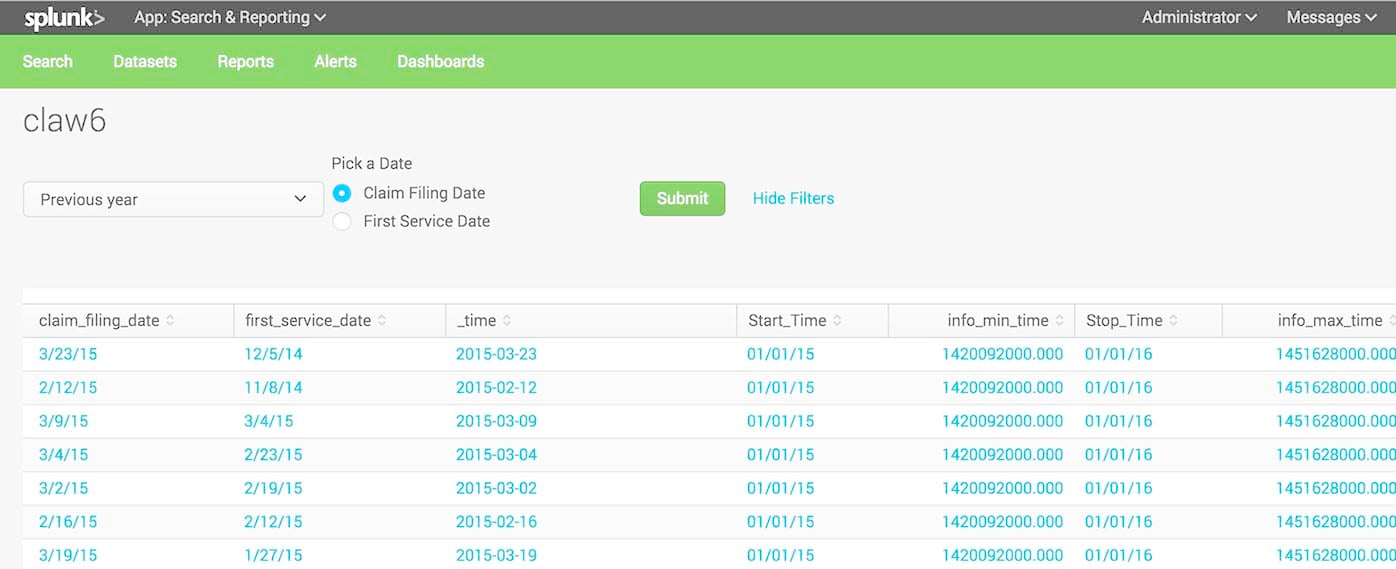

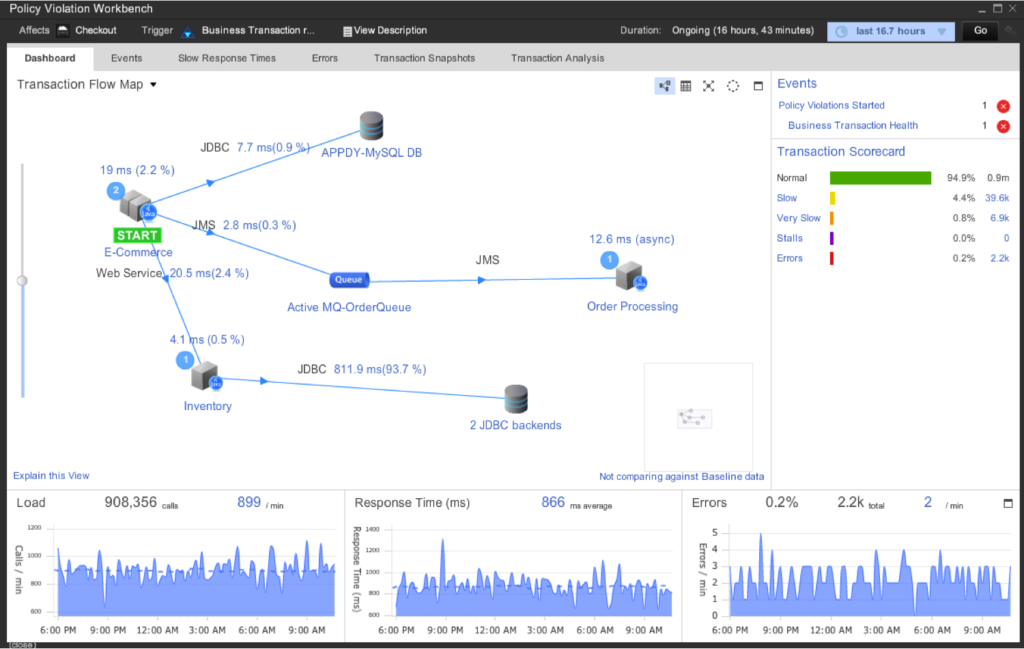

Systems generate a lot of machine data from activity such as events and logs. The idea of Splunk is to be a data platform that captures and indexes all this data so that it can be retrieved and interpreted in a meaningful way. Since Splunk is intended to index massive amounts of machine data, it has a large scope of use cases. A data platform could give insight into many aspects of a system, including application performance, security, hardware monitoring, sales, user metrics, and reporting and audits.

In this blog post I want to give an introduction to Splunk. I will cover how we can use it to search and interpret data and to generate reports and dashboards, and I will point out features that have been very helpful for me as a developer. Disclaimer: I'm not at all a Splunk expert! That said, even with a rudimentary understanding I have found tremendous value in incorporating Splunk into my daily workflow. My experience is mainly with Splunk, but the approaches I cover in this post should be applicable to alternative solutions, such as the ELK stack.

The Splunk Architecture

A Splunk system consists of forwarders, indexers, and search heads. A forwarder is an instance that sends data to an indexer or another forwarder. An indexer stores and manages the data. A search head distributes search requests to indexers and merges the results for the user. Splunk can acquire data that is sourced from programs or from manually uploaded files.

I am setting up a report of Username, Logged in time, Logged out time, Internal and External IP Addresses from a VPN node log.

I have already determined how I can get the identifying marks for the start and end events and the IP Addresses (all in different events - thank you), and I have created a transaction to group them together. Here is my search string, as is:

`index=infrastructure sourcetype=syslog Session_Number="*" | transaction Session_Number | fields Outside_IP, Client_Inside_IP, login_username`

However, I then want to take the Internal IP Address and the start (logged in) and end (logged out) times and use that data in a subsearch against other logs. I know that I could use the stats command to get the Earliest and Latest times, but I need the other fields in the output, so I need a transaction. The stats version would get me:

`index=infrastructure sourcetype=syslog Session_Number="*" | stats earliest(_time) AS Login_Time, latest(_time) AS Logout_Time by Session_Number | convert ctime(Login_Time) ctime(Logout_Time)`

However, how do I put these two together to have both? Ideally, I would ask that Splunk add the fields _transaction_start_time and _transaction_end_time to the function, but that might be asking too much.

There are a few ways to do this. Here are a couple that come to mind:

1. The time of the first event in the transaction is assigned to _time for the entire transaction. The transaction command automatically assigns a duration field to each transaction. You can eval the end time to be _time + duration. This gives you a per-transaction Login_Time and Logout_Time:

`beginning of your search and transaction | eval Login_Time=_time | eval Logout_Time=_time + duration | rest of your search or reporting commands`

2. You could eval the start and end times before your transaction command in the search string. Then, when your transaction is built, Login_Time and Logout_Time are added as fields to the transaction:

`your search before transaction | eval Login_Time=if(searchmatch("Received User-Agent header"),_time,null()) | eval Logout_Time=if(searchmatch("Session statistics - bytes in"),_time,null()) | transaction command and rest of search`

Hopefully one of those ideas will help you out.
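To make the two ways of deriving per-session login and logout times more concrete, here is a minimal Python sketch that models them outside Splunk. It is not how Splunk implements `transaction`. The sample events, the IP addresses, the username, and the helper `build_transactions` are all hypothetical; only the field names and the two marker strings are taken from the searches in this post.

```python
from collections import defaultdict

# Marker strings taken from the searches in this post.
LOGIN_MARKER = "Received User-Agent header"
LOGOUT_MARKER = "Session statistics - bytes in"

# Hypothetical sample events (epoch seconds); real VPN logs will look different.
EVENTS = [
    {"_time": 1000, "Session_Number": "42", "msg": "Received User-Agent header",
     "Outside_IP": "203.0.113.7"},
    {"_time": 1005, "Session_Number": "42", "msg": "Assigned address",
     "Client_Inside_IP": "10.0.0.15", "login_username": "alice"},
    {"_time": 1900, "Session_Number": "42", "msg": "Session statistics - bytes in 1234"},
    # Session 43 has no logout event yet.
    {"_time": 1200, "Session_Number": "43", "msg": "Received User-Agent header"},
    {"_time": 1260, "Session_Number": "43", "msg": "Assigned address",
     "Client_Inside_IP": "10.0.0.16"},
]


def build_transactions(events):
    """Group events by Session_Number and merge their fields into one record each."""
    grouped = defaultdict(list)
    for event in events:
        grouped[event["Session_Number"]].append(event)

    transactions = {}
    for session, members in grouped.items():
        members.sort(key=lambda e: e["_time"])

        # Simplification: keep the first value seen for each field.
        merged = {}
        for event in members:
            for key, value in event.items():
                if key not in ("_time", "msg"):
                    merged.setdefault(key, value)

        # Approach 1: start is the first event's time, end is start + duration.
        start = members[0]["_time"]
        merged["_time"] = start
        merged["duration"] = members[-1]["_time"] - start
        merged["Login_Time"] = start
        merged["Logout_Time"] = start + merged["duration"]

        # Approach 2: take the times only from events matching the markers.
        merged["Login_Marker_Time"] = next(
            (e["_time"] for e in members if LOGIN_MARKER in e["msg"]), None)
        merged["Logout_Marker_Time"] = next(
            (e["_time"] for e in members if LOGOUT_MARKER in e["msg"]), None)

        transactions[session] = merged
    return transactions


sessions = build_transactions(EVENTS)
```

In this toy data, session 43 never logs out. The first approach still reports the time of its last event as `Logout_Time`, while the marker-based approach leaves `Logout_Marker_Time` empty. That difference may matter if open sessions can show up in the report.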
